When daylight saving time starts and the computer’s clock jumps forward, what does cron do? If the clock jumps from 1AM to 2AM and there is a job scheduled for 1:30AM, will cron run this job? If yes, when? Likewise, when daylight saving time ends and the clock jumps backward, could cron run the same scheduled job twice?

Let’s look at what “man cron” says. On my Debian-based system, under the “Notes” section:

Special considerations exist when the clock is changed by less than 3 hours, for example at the beginning and end of daylight savings time. If the time has moved forwards, those jobs which would have run in the time that was skipped will be run soon after the change. Conversely, if the time has moved backwards by less than 3 hours, those jobs that fall into the repeated time will not be re-run.

Only jobs that run at a particular time (not specified as @hourly, nor with ‘*’ in the hour or minute specifier) are affected. Jobs which are specified with wildcards are run based on the new time immediately.

Clock changes of more than 3 hours are considered to be corrections to the clock, and the new time is used immediately.

After a fair bit of experimenting, I can say the above is mostly accurate. But it takes some explaining, at least it did for me. Debian cron distinguishes between “fixed-time jobs” and “wildcard jobs”, and handles them differently when the clock jumps forward or backward.

Wildcard jobs. Here’s a specific example: */10 * * * *, or, in human words, “every 10 minutes”. Debian cron will try to maintain even 10-minute intervals between executions.

Fixed-time jobs. Now consider 30 1 * * *, or “run at 1:30AM every day”. Here, the special DST handling logic will kick in. If the clock jumps an hour forward from 1AM to 2AM, Debian cron will execute the job at 2AM. And, if the clock jumps from 2AM to 1AM, Debian cron will not run the job again at the second 1:30AM.
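To keep the man page's three rules straight, here is a minimal Python sketch that encodes them as a decision function. It is only a paraphrase of the quoted text, not Debian cron's actual code. The names `Action` and `action_for_jump` are made up for illustration. The sketch assumes the job's scheduled time falls inside the skipped or repeated window, and it treats a jump of exactly 3 hours as a correction, which the man page does not spell out.

```python
from enum import Enum


class Action(Enum):
    USE_NEW_TIME = "schedule against the new time immediately"
    RUN_SKIPPED_SOON = "run the skipped job soon after the change"
    SKIP_REPEAT = "do not re-run the job in the repeated time"


# The man page says "less than 3 hours" and "more than 3 hours".
# Treating exactly 3 hours as a correction is an assumption of this sketch.
CORRECTION_THRESHOLD_MINUTES = 180


def action_for_jump(is_wildcard: bool, jump_minutes: int) -> Action:
    """Pick the man page rule for one job and one clock jump.

    jump_minutes is positive when the clock moves forward and
    negative when it moves backward.
    """
    if jump_minutes == 0:
        raise ValueError("the clock did not jump")
    if abs(jump_minutes) >= CORRECTION_THRESHOLD_MINUTES:
        # Large jumps count as clock corrections for every job.
        return Action.USE_NEW_TIME
    if is_wildcard:
        # Wildcard jobs simply follow the new time.
        return Action.USE_NEW_TIME
    if jump_minutes > 0:
        return Action.RUN_SKIPPED_SOON
    return Action.SKIP_REPEAT


# A fixed-time job such as "30 1 * * *" across a one-hour forward jump.
print(action_for_jump(is_wildcard=False, jump_minutes=60).value)
```

How a job ends up classified as wildcard or fixed-time is a separate question, which the rest of the post digs into.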

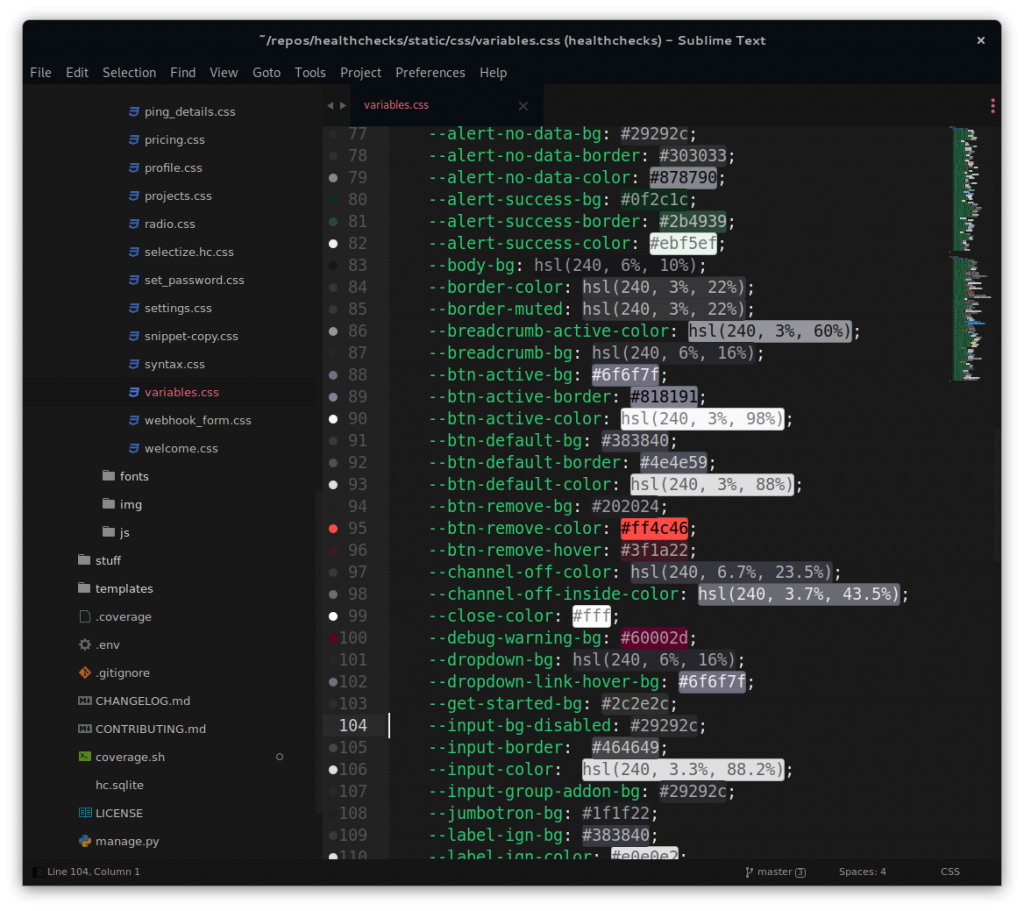

What are the precise rules for distinguishing between wildcard jobs and fixed-time jobs? Let’s look at the source code!

Source

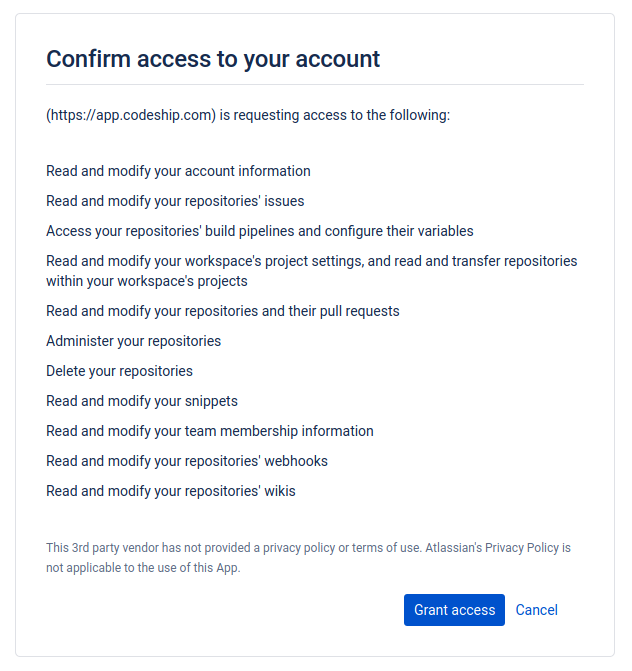

Debian cron is based on Vixie cron, but it adds Debian-specific feature and bugfix patches on top. The special DST handling logic is one such patch. I found Debian cron source code at salsa.debian.org/debian/cron/. Here is the DST patch: Better-timeskip-handling.patch.

Unless you are already familiar with cron source, to understand the patch, you would want to see it in context. We can apply Debian patches in the correct order using the quilt tool:

$ git clone https://salsa.debian.org/debian/cron.git

$ cd cron

$ QUILT_PATCHES=debian/patches quilt push -a

Now we can read through entry.c and cron.c and learn how they work. My C skills are somewhere at the FizzBuzz level, so this is a little tricky. Anyway, it looks like cron parses the expression one character at a time. At every step, it knows how far into the expression it is, whether it is parsing a number, a range, a symbolic month reference, and so on. If the first character of the minute or the hour specifier is the wildcard, it sets the MIN_STAR or HR_STAR flags. It later uses these flags to decide whether to use the special DST handling logic.

Here’s what this means for specific examples:

- * 1 * * * (every minute from 1:00 to 1:59) is a wildcard expression because the minute specifier is “*”.
- 15 * * * * (at 15 minutes past every hour) is a wildcard expression because the hour specifier is “*”.
- 15 */2 * * * (at 0:15, 2:15, 4:15, …) is also a wildcard expression because the hour specifier starts with “*”.
- 0-59 1 * * * (every minute from 1:00 to 1:59) is not a wildcard expression because neither the minute specifier nor the hour specifier starts with “*”.
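The rule as I read it can be written down in a few lines of Python. This is a sketch of my interpretation, not a port of entry.c. The function name `is_wildcard_job` is hypothetical, and it only handles plain five-field expressions (no @hourly-style nicknames).

```python
def is_wildcard_job(expression: str) -> bool:
    """Return True if the minute or hour field starts with '*'.

    Mirrors the reading of the MIN_STAR / HR_STAR flags described above:
    only the first character of each of the two fields matters.
    """
    fields = expression.split()
    if len(fields) != 5:
        raise ValueError("expected a five-field cron expression")
    minute, hour = fields[0], fields[1]
    return minute.startswith("*") or hour.startswith("*")


for expr in ["* 1 * * *", "15 * * * *", "15 */2 * * *", "0-59 1 * * *"]:
    kind = "wildcard" if is_wildcard_job(expr) else "fixed-time"
    print(f"{expr!r}: {kind}")
```

Note that "* 1 * * *" and "0-59 1 * * *" match exactly the same minutes, yet end up in different categories under this rule.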

Quite interesting! But I am not a C compiler (gasp!), and my interpretation may very well be wrong. Let’s test this experimentally, by actually running Debian cron. And, since we are impatient, let’s speed up time using QEMU magic.

QEMU Magic

I followed these instructions to install Debian in QEMU. I then launched QEMU with the following parameters:

$ qemu-system-x86_64 -nographic -m 8G -hda test.img -icount shift=0,align=off,sleep=off -rtc base=2021-03-27,clock=vm

The -icount (instruction counter) parameter is the main hero here. By setting align=off,sleep=off we allow the emulated system’s clock to run faster than real-time, as fast as the host CPU can manage. We can also tweak the shift parameter for even faster time travel (read the QEMU man page for more on this).

Inside the emulated system, I set the system timezone to “Europe/Dublin”, and added my test entries in root’s crontab. I tested many different expressions, but let’s look at the following two – the first one is a wildcard job, and the second one is a fixed-time job right in the middle of the DST transition window for Europe/Dublin:

$ crontab -l

30 * * * * logger -t experiment1 `date -Iseconds`

30 1 * * * logger -t experiment2 `date -Iseconds`

In the “Europe/Dublin” timezone, daylight saving time in 2021 started on March 28 at 1AM, when the clock moved 1 hour forward. Let’s see how Debian cron handles it:

$ journalctl --since "2021-03-27" -t experiment1

[...]

Mar 27 23:30:01 debian experiment1[1016]: 2021-03-27T23:30:01+00:00

Mar 28 00:30:01 debian experiment1[3456]: 2021-03-28T00:30:01+00:00

Mar 28 02:30:01 debian experiment1[3866]: 2021-03-28T02:30:01+01:00

Mar 28 03:30:01 debian experiment1[3887]: 2021-03-28T03:30:01+01:00

[...]

We can see the wildcard job ran 30 minutes past every hour, but the entry for 1:30 is missing. This is because this time “doesn’t exist”: the local time skipped from 00:59 directly to 02:00. Now let’s look at the fixed-time job:

$ journalctl --since "2021-03-27" -t experiment2

Mar 27 01:30:01 debian experiment2[366]: 2021-03-27T01:30:01+00:00

Mar 28 02:00:01 debian experiment2[3849]: 2021-03-28T02:00:01+01:00

Mar 29 01:30:01 debian experiment2[4551]: 2021-03-29T01:30:01+01:00

[...]

On March 28, the job was scheduled to run at 01:30, but instead, it was run at 02:00. This is Debian cron’s special DST handling in action: “If the time has moved forwards, those jobs which would have run in the time that was skipped will be run soon after the change.”
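The missing 01:30 in the experiment1 log can be reproduced without cron at all. The following Python sketch walks through UTC minute by minute and prints the local wall-clock time of every half-past mark. To stay self-contained it does not use a timezone database. Instead it hard-codes the two 2021 transition instants for Europe/Dublin (01:00 UTC on March 28 and on October 31), which is an assumption that only holds for that year. The helper names are made up for illustration.

```python
from datetime import datetime, timedelta, timezone

# Assumption: hard-coded 2021 transition instants for Europe/Dublin, in UTC.
DST_START_UTC = datetime(2021, 3, 28, 1, 0, tzinfo=timezone.utc)
DST_END_UTC = datetime(2021, 10, 31, 1, 0, tzinfo=timezone.utc)


def dublin_local_2021(utc_time: datetime) -> datetime:
    """Convert an aware UTC datetime to Dublin wall-clock time (2021 only)."""
    in_summer_time = DST_START_UTC <= utc_time < DST_END_UTC
    offset = timedelta(hours=1 if in_summer_time else 0)
    return utc_time.astimezone(timezone(offset))


def local_half_hours(start_utc: datetime, hours: int) -> list:
    """List the local HH:MM of every ':30' minute in the given UTC span."""
    result = []
    for minute in range(hours * 60):
        local = dublin_local_2021(start_utc + timedelta(minutes=minute))
        if local.minute == 30:
            result.append(local.strftime("%H:%M"))
    return result


# Five hours of real time around the March 28 transition.
start = datetime(2021, 3, 27, 23, 0, tzinfo=timezone.utc)
print(local_half_hours(start, 5))
```

The printed list goes from 00:30 straight to 02:30, just like the experiment1 entries: there is no local 01:30 on that night for a wildcard job to hit.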

Now let’s look at October 2021. In the “Europe/Dublin” timezone, daylight saving time ended on October 31 at 2AM, when the clock moved 1 hour back.

$ journalctl --since "2021-10-30" -t experiment1

[...]

Oct 31 00:30:01 debian experiment1[1166]: 2021-10-31T00:30:01+01:00

Oct 31 01:30:01 debian experiment1[1191]: 2021-10-31T01:30:01+01:00

Oct 31 01:30:01 debian experiment1[1212]: 2021-10-31T01:30:01+00:00

Oct 31 02:30:01 debian experiment1[1233]: 2021-10-31T02:30:01+00:00

[...]

In this one, it appears as if the wildcard job ran twice at 1:30. But, if you look closely at the ISO8601 timestamp, you can see the timezone offsets are different. The first run was before the DST transition, then the clock moved 1 hour back, and the second run happened an hour later. Debian cron maintains a regular cadence for wildcard jobs (60 minutes for this job). Now, the fixed-time job:

$ journalctl --since "2021-10-30" -t experiment2

Oct 30 01:30:01 debian experiment2[444]: 2021-10-30T01:30:01+01:00

Oct 31 01:30:01 debian experiment2[1192]: 2021-10-31T01:30:01+01:00

Nov 01 01:30:01 debian experiment2[1950]: 2021-11-01T01:30:01+00:00

[...]

The fixed-time job was executed once at 01:30 but was not run again an hour later. This is again thanks to the special DST handling: “if the time has moved backwards by less than 3 hours, those jobs that fall into the repeated time will not be re-run”.
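Going back to the two 01:30 entries logged by experiment1 on October 31: a quick way to confirm they are a full hour apart is to parse the two ISO8601 timestamps from the log and subtract them. A small Python sketch using only the standard library:

```python
from datetime import datetime, timedelta

# The two timestamps logged by experiment1 on October 31.
first = datetime.fromisoformat("2021-10-31T01:30:01+01:00")
second = datetime.fromisoformat("2021-10-31T01:30:01+00:00")

# Subtracting aware datetimes compares the underlying instants,
# so the differing UTC offsets are taken into account.
gap = second - first
print(gap)

# The wall-clock readings, with the offsets stripped, are identical.
same_wall_clock = first.replace(tzinfo=None) == second.replace(tzinfo=None)
print(same_wall_clock)
```

Same wall-clock time, different instants: the wildcard job kept its 60-minute cadence, while the fixed-time job was deliberately held back from the second 01:30.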

Let’s also check if Debian cron treats 0-59 1 * * * as a wildcard or a fixed-time job.

$ crontab -l

0-59 1 * * * logger -t experiment3 `date -Iseconds`

$ journalctl --since "2021-03-27" -t experiment3

[...]

Mar 27 01:57:01 debian experiment3[598]: 2021-03-27T01:57:01+00:00

Mar 27 01:58:01 debian experiment3[602]: 2021-03-27T01:58:01+00:00

Mar 27 01:59:01 debian experiment3[606]: 2021-03-27T01:59:01+00:00

Mar 28 02:00:01 debian experiment3[1218]: 2021-03-28T02:00:01+01:00

Mar 28 02:00:01 debian experiment3[1222]: 2021-03-28T02:00:01+01:00

Mar 28 02:00:01 debian experiment3[1226]: 2021-03-28T02:00:01+01:00

[...]

On March 27, the job ran at minute intervals, but on March 28 the runs are all bunched up at 02:00. In other words, Debian cron treated this as a fixed-time job and applied the special handling.

I’ve found the QEMU setup to be a handy tool for checking assumptions and hypotheses about cron’s behavior. Thanks to the accelerated clock, experiments take minutes or hours, not days or weeks.

Who Cares, and Closing Notes

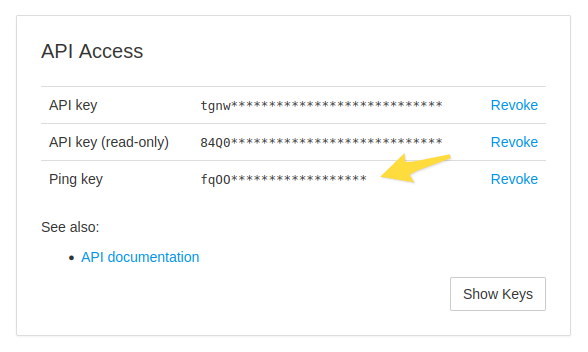

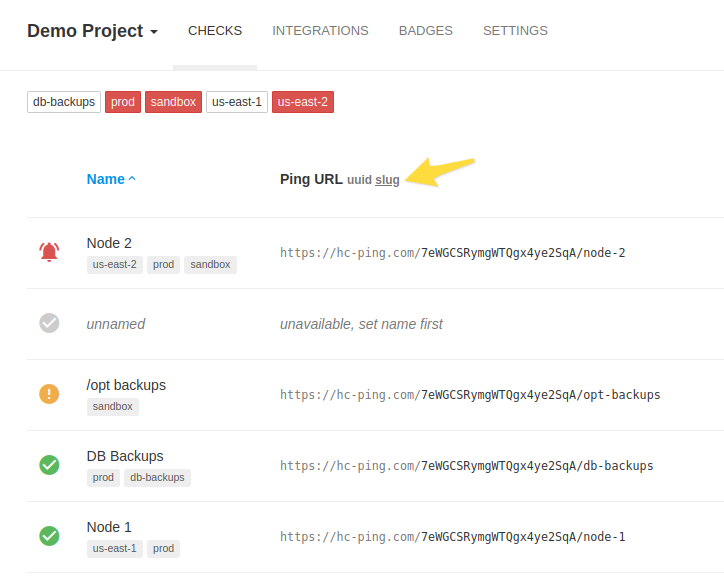

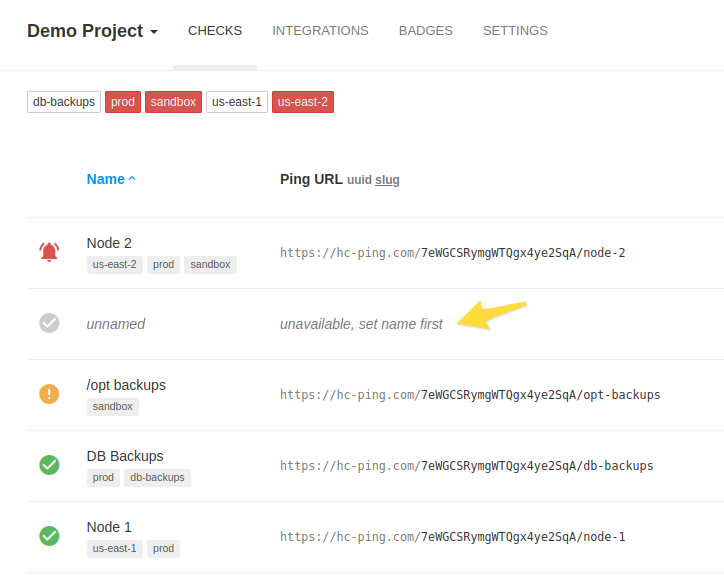

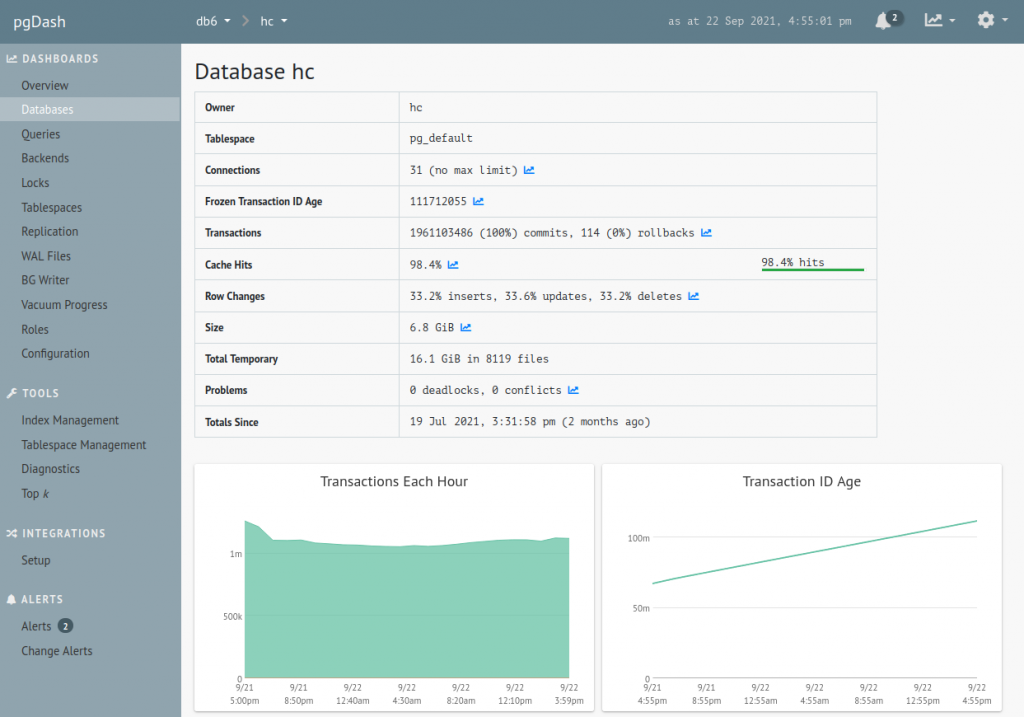

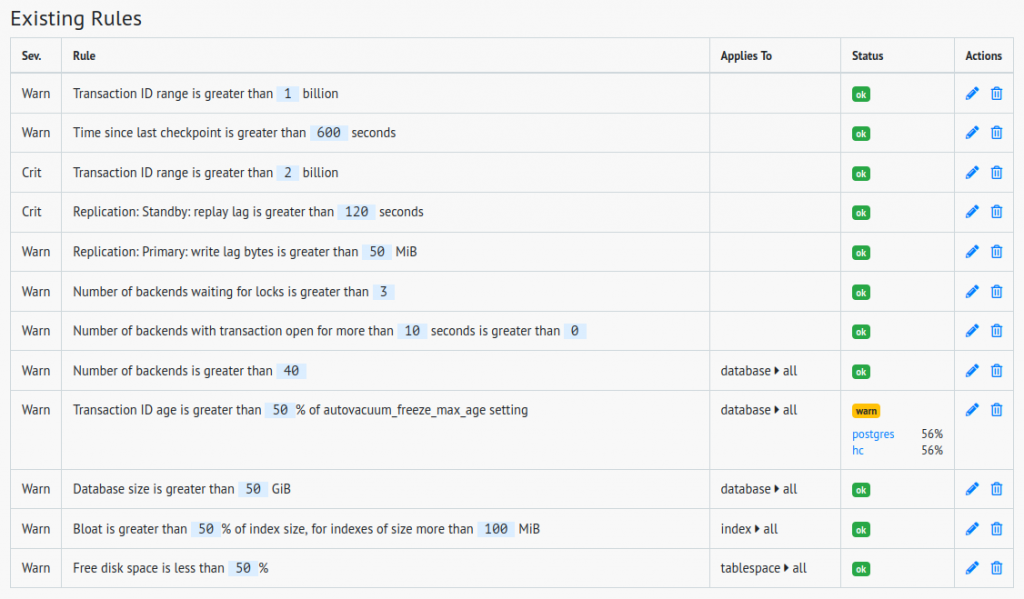

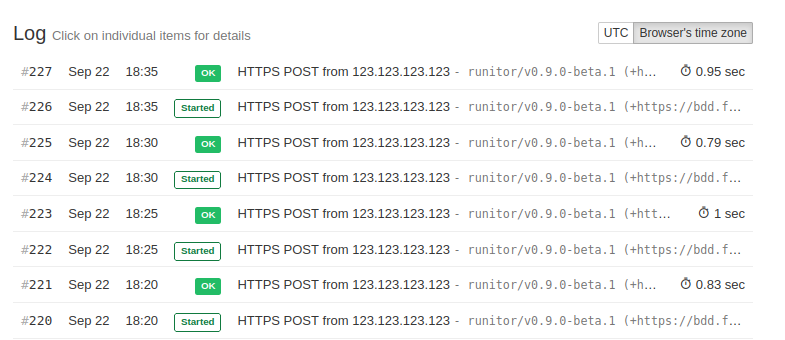

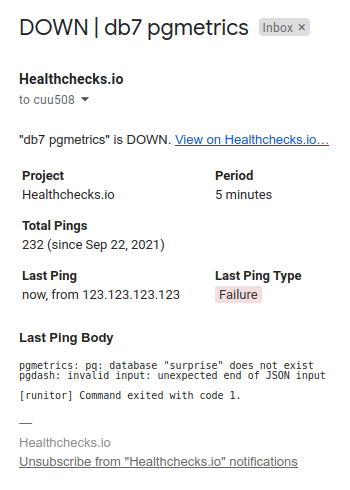

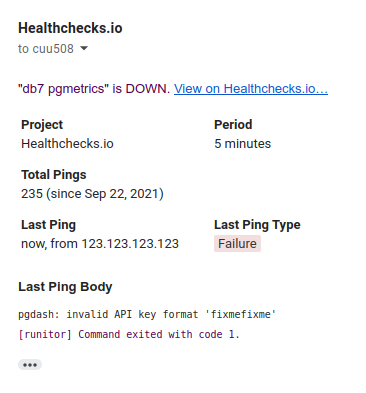

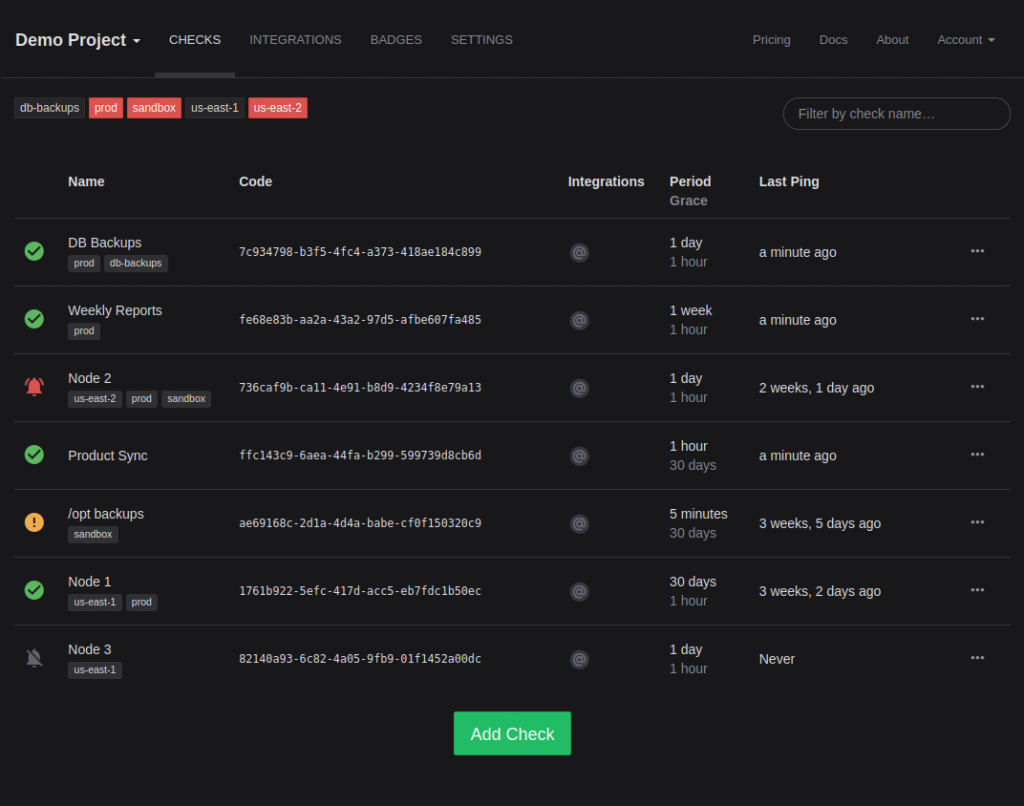

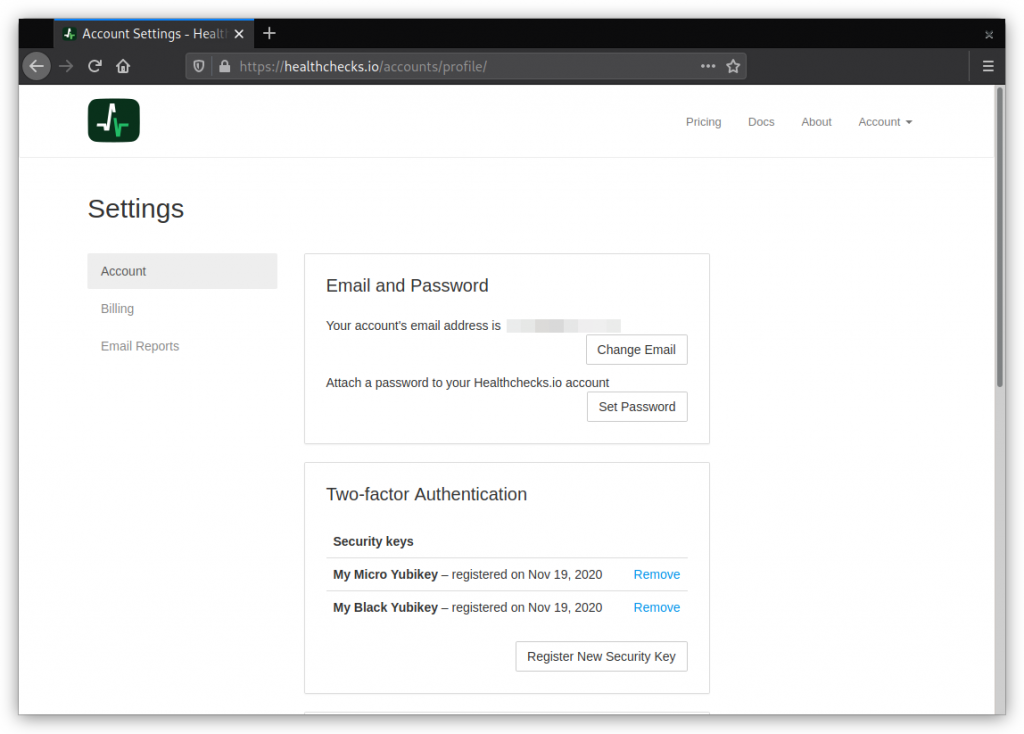

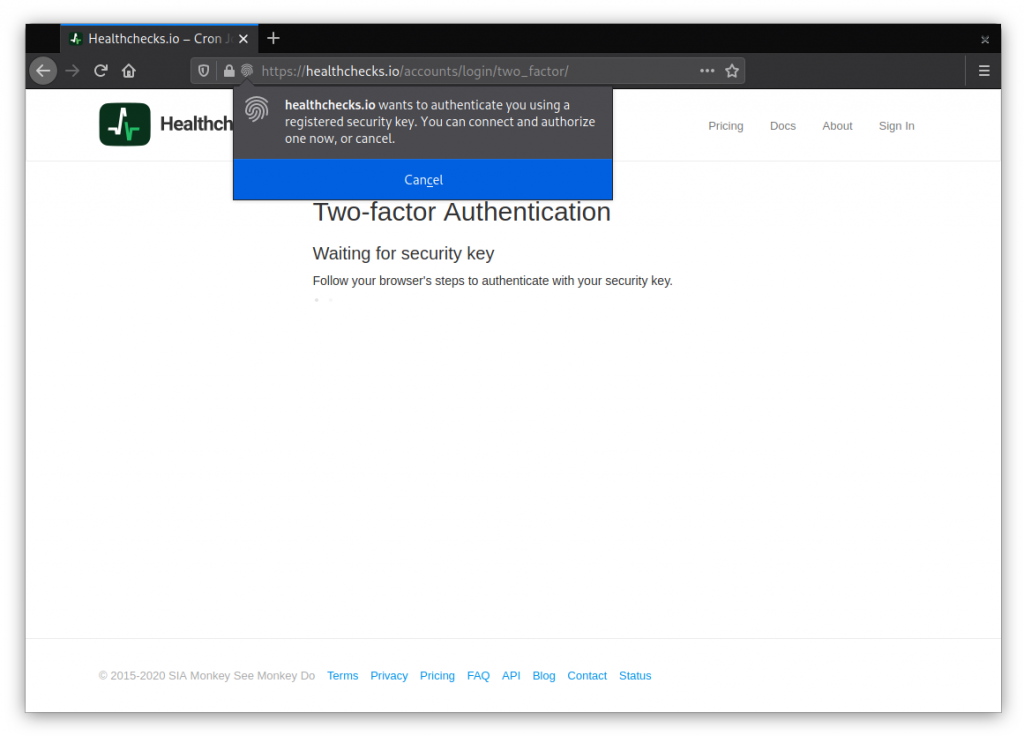

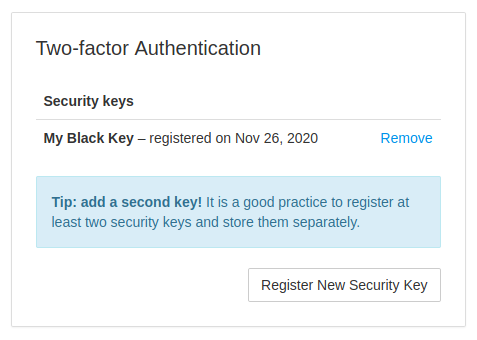

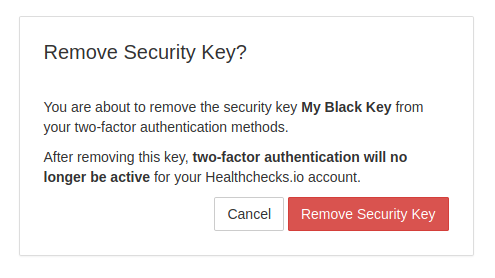

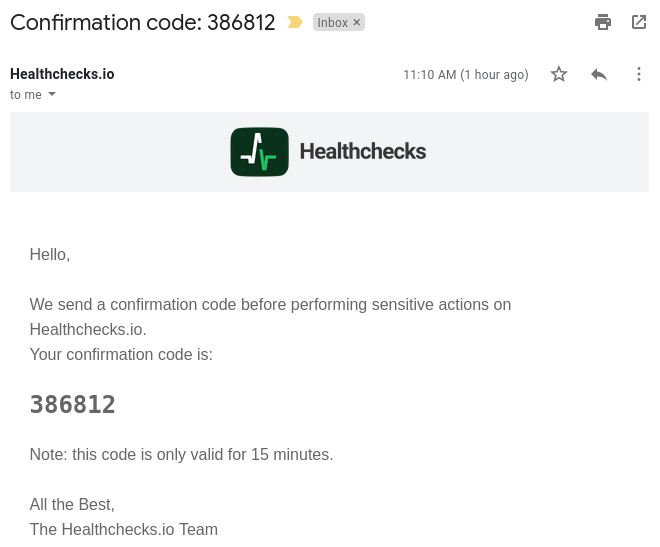

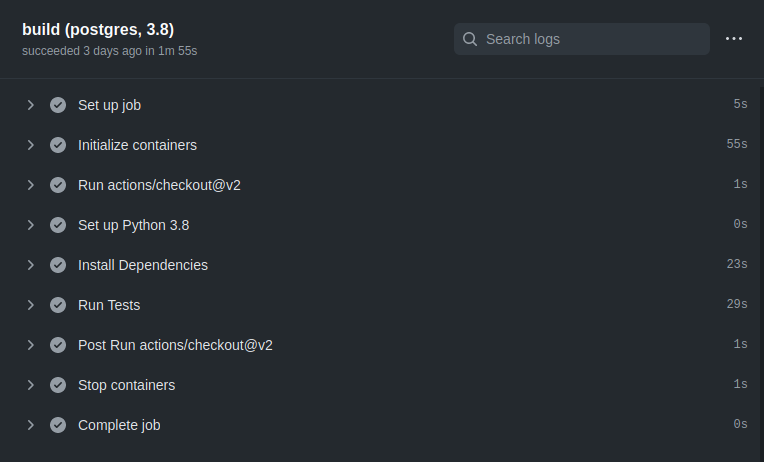

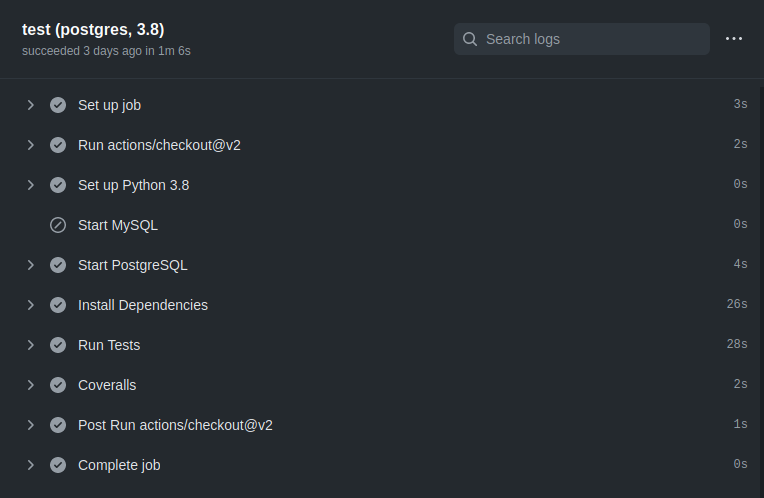

Who cares about all this? Well – I do! Healthchecks.io is a cron job monitoring service, so its cron handling logic needs to be as robust and correct as possible.

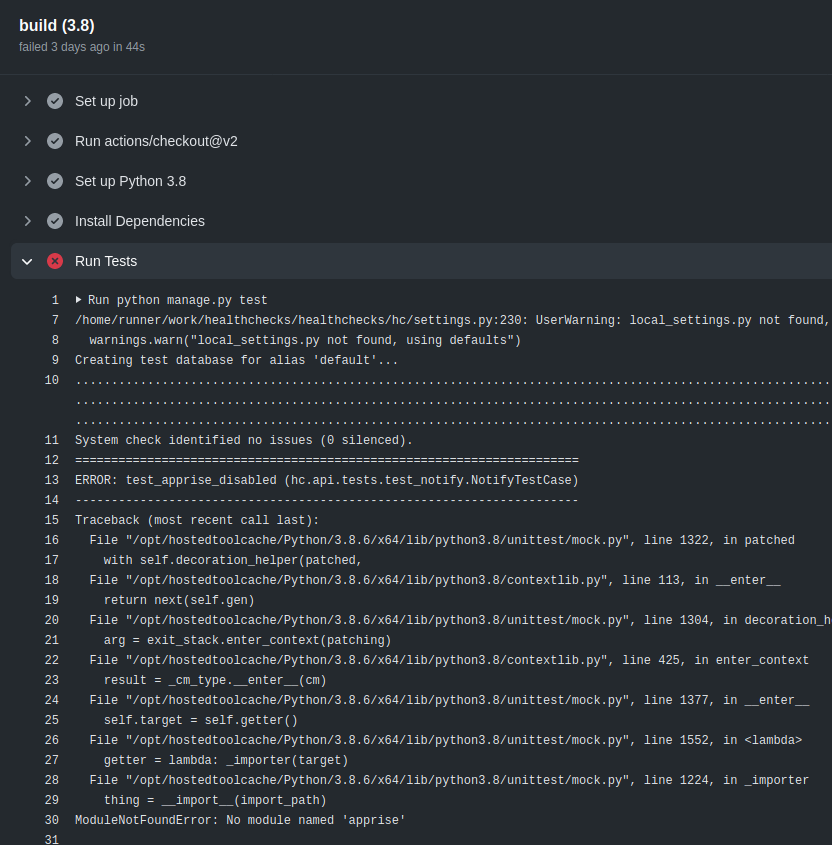

Like many other Python projects, Healthchecks used croniter for handling cron expressions. It did not seem viable to fix DST handling bugs in croniter, so I started a separate library, cronsim. It is smaller, quicker, and tested against Debian cron with 5000+ different cron expressions.

Ah, but why target Debian cron and not some other cron implementation? To be honest, primarily because I happen to use Ubuntu (a Debian derivative) on all my systems. I also suspect Debian and its derivatives together have a large if not the largest server OS market share, so it is a reasonable target.

One final note: there is a simple alternative to dealing with the DST complexity. Use UTC on your servers!

That’s all for now, thanks for reading!