Time flies, and Healthchecks.io is already 6 years old. Here’s a quick review of notable recent events and the project’s current state.

Database Migration

The Healthchecks.io database used to run on PostgreSQL 10. In March 2021, I migrated it to PostgreSQL 13. For the upgrade method, I used logical replication, as suggested on Reddit.

The idea is to set up a Postgres 13 replica, replicate the data to it, and then fail over to it. But there are, of course, several gotchas, and everything has to be thoroughly tested beforehand. I found this guide and worked through it. I made a step-by-step migration plan and tested it on Vagrant VMs. I then iteratively improved the plan and did more test migrations until everything was working smoothly and I knew the order of commands almost by heart.
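The replication part of such a plan boils down to a handful of SQL statements. The Python below only builds them as strings, and it is a sketch, not the actual migration plan: the publication and subscription names, the connection string, the sequence values, and the safety margin are all hypothetical. It reflects one well-known gotcha of logical replication: it copies table rows but neither the schema nor sequence positions, so the schema must exist on the new server beforehand (for example, restored from `pg_dump --schema-only`), and sequences must be advanced before the application switches over.

```python
from typing import Dict, List, Tuple


def migration_sql(
    pub: str,
    sub: str,
    source_conninfo: str,
    sequences: Dict[str, int],
    margin: int = 1000,
) -> Tuple[List[str], List[str], List[str]]:
    """Return (source, target, cutover) SQL for a logical-replication upgrade.

    All names and values passed in are hypothetical placeholders; the
    connection string is interpolated as-is, so it must not contain quotes.
    """
    # On the old (PostgreSQL 10) server: publish changes to every table.
    source = [f"CREATE PUBLICATION {pub} FOR ALL TABLES;"]

    # On the new (PostgreSQL 13) server, after the schema has been restored:
    # subscribing copies the existing rows, then streams new changes.
    target = [
        f"CREATE SUBSCRIPTION {sub} CONNECTION '{source_conninfo}' PUBLICATION {pub};"
    ]

    # At cutover: sequences are not replicated, so move each one past the
    # last value seen on the old server (plus a margin), then stop replicating.
    cutover = [
        f"SELECT setval('{name}', {last_value + margin});"
        for name, last_value in sorted(sequences.items())
    ]
    cutover.append(f"DROP SUBSCRIPTION {sub};")
    return source, target, cutover
```

Generating the statements from one function keeps the publication and subscription names consistent across the three phases, which matters when the same plan is rehearsed many times on test VMs.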

Then it was time to announce maintenance, provision new hardware (two Ryzen 5950X machines, each with 16 cores, 64GB RAM, and 2x4TB NVMe drives, aw yiss), set them up, and do the migration for real. And it all worked as planned!

Wireguard

Hetzner has a feature called vSwitch for setting up private networks between hosts. I had it set up, and the infrastructure servers (load balancers, app servers, databases) were communicating with each other over internal IPs.

In my experience, vSwitch turned out to be less reliable than the regular network. There was an incident where the vSwitch network interface on one machine was not working while the public interface was still fine. The issue got resolved after contacting Hetzner support, but I decided to go back to using public interfaces. I used firewall rules to control which IPs can connect to which ports.

Although Hetzner support says their internal network is secure and customers cannot snoop on other customers’ traffic, I wanted to reduce the trust placed in Hetzner, so I set up Wireguard tunnels between the servers. I did not use Tailscale or anything fancy like that, just a few Fabric recipes for the initial setup and for updating peers (when a server is added to or removed from the network).
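A recipe for updating peers essentially regenerates each server’s Wireguard config from the current list of servers. Here is a minimal sketch of that rendering step, assuming a full mesh (every server peers with every other server), the conventional Wireguard port 51820, and a hypothetical 10.0.0.0/24 tunnel network. The `Host` class and `render_wg_config` function are made up for illustration; they are not the actual Fabric recipes.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Host:
    name: str
    public_key: str  # Wireguard public key (base64)
    public_ip: str   # address reachable over the public interface
    tunnel_ip: str   # hypothetical address inside the tunnel network


def render_wg_config(me: Host, private_key: str, hosts: List[Host],
                     port: int = 51820) -> str:
    """Render a wg-quick config for `me`, with every other host as a peer."""
    lines = [
        "[Interface]",
        f"PrivateKey = {private_key}",
        f"Address = {me.tunnel_ip}/24",
        f"ListenPort = {port}",
    ]
    for peer in hosts:
        if peer.name == me.name:
            continue  # a host is not its own peer
        lines += [
            "",
            "[Peer]",
            f"PublicKey = {peer.public_key}",
            # Tunnel traffic travels over the public interface.
            f"Endpoint = {peer.public_ip}:{port}",
            # Only packets for this peer's tunnel address are routed to it.
            f"AllowedIPs = {peer.tunnel_ip}/32",
        ]
    return "\n".join(lines) + "\n"
```

Adding or removing a server then means re-rendering the file on every host. With wg-quick, `wg syncconf wg0 <(wg-quick strip wg0)` can apply peer changes without tearing down the interface.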

A small gotcha here was that services did not always start automatically after a system reboot. I had to tweak the systemd service definitions to make sure network-dependent services (nginx, postgres) start only after Wireguard has initialized.
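One way to express that ordering is a systemd drop-in file next to each service. The sketch below renders one in Python, as a Fabric recipe might, assuming the tunnel is brought up by the `wg-quick@wg0.service` unit that ships with wireguard-tools. The interface name, the drop-in file name, and the `wireguard_dropin` helper are hypothetical, and this is not necessarily how the service definitions were actually changed.

```python
from pathlib import PurePosixPath
from typing import Tuple


def wireguard_dropin(service: str, interface: str = "wg0") -> Tuple[PurePosixPath, str]:
    """Return (path, contents) of a drop-in that starts `service` after Wireguard."""
    wg_unit = f"wg-quick@{interface}.service"
    # Drop-ins live in /etc/systemd/system/<unit>.d/*.conf and extend the unit.
    path = PurePosixPath("/etc/systemd/system") / f"{service}.d" / "wireguard.conf"
    contents = "\n".join([
        "[Unit]",
        # Requires= pulls the tunnel unit in; After= makes this service wait for it.
        f"Requires={wg_unit}",
        f"After={wg_unit}",
    ]) + "\n"
    return path, contents
```

After writing the file, `systemctl daemon-reload` makes systemd pick it up. With `Requires=` plus `After=`, the service will not start at all if the tunnel fails to come up; `Wants=` is the softer alternative.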

Self-hosted Postgres, bespoke Wireguard tunnels, can you hear the innovation tokens burning up yet? 🙂

Signal

Healthchecks.io has had a Signal integration for a couple of months now. I think Signal has been the trickiest integration to implement and set up so far. Unlike most other services, Signal does not have a public HTTP API you can call to send messages. Instead, you have to run a Signal client locally and communicate with it to send the messages. Luckily, there is signal-cli, a wrapper around the official Signal Java client library. I run signal-cli under a separate OS user account, and Healthchecks communicates with it over DBus (details). Multiple app servers send out notifications: each one runs its own signal-cli instance, and all signal-cli instances are linked to a single Signal account (phone number).
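For illustration, here is one way to ask a running signal-cli daemon to send a message over DBus: building a `dbus-send` command. This is a sketch, not necessarily how Healthchecks itself talks to signal-cli. It assumes the daemon runs in single-account mode on the system bus under signal-cli’s `org.asamk.Signal` name, and the `signal_send_argv` helper is hypothetical; check the DBus interface documentation for your signal-cli version before relying on the method signature.

```python
from typing import List


def signal_send_argv(recipient: str, text: str, bus: str = "system") -> List[str]:
    """Build a dbus-send command asking a signal-cli daemon to send `text`."""
    return [
        "dbus-send",
        f"--{bus}",                 # assumed: daemon is on the system bus
        "--type=method_call",
        "--print-reply",            # wait for the reply (the message timestamp)
        "--dest=org.asamk.Signal",  # bus name registered by signal-cli
        "/org/asamk/Signal",        # object path in single-account mode
        "org.asamk.Signal.sendMessage",
        f"string:{text}",           # message body
        "array:string:",            # empty list of attachments
        f"string:{recipient}",      # recipient phone number
    ]
```

Passing the list to `subprocess.run(argv, check=True)` avoids shell quoting problems with arbitrary message text, since no shell is involved.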

After deploying and announcing the Signal integration, I was glad to see a quick uptake:

- SMS was introduced in July 2017, and has approx. 500 configured integrations

- WhatsApp was introduced in July 2019, and has approx. 450 configured integrations

- Signal was introduced in January 2021, and has approx. 350 configured integrations

When looking at these numbers, keep in mind that free accounts have only a small sending quota for SMS and WhatsApp (because sending these notifications costs money), while Signal is unrestricted.

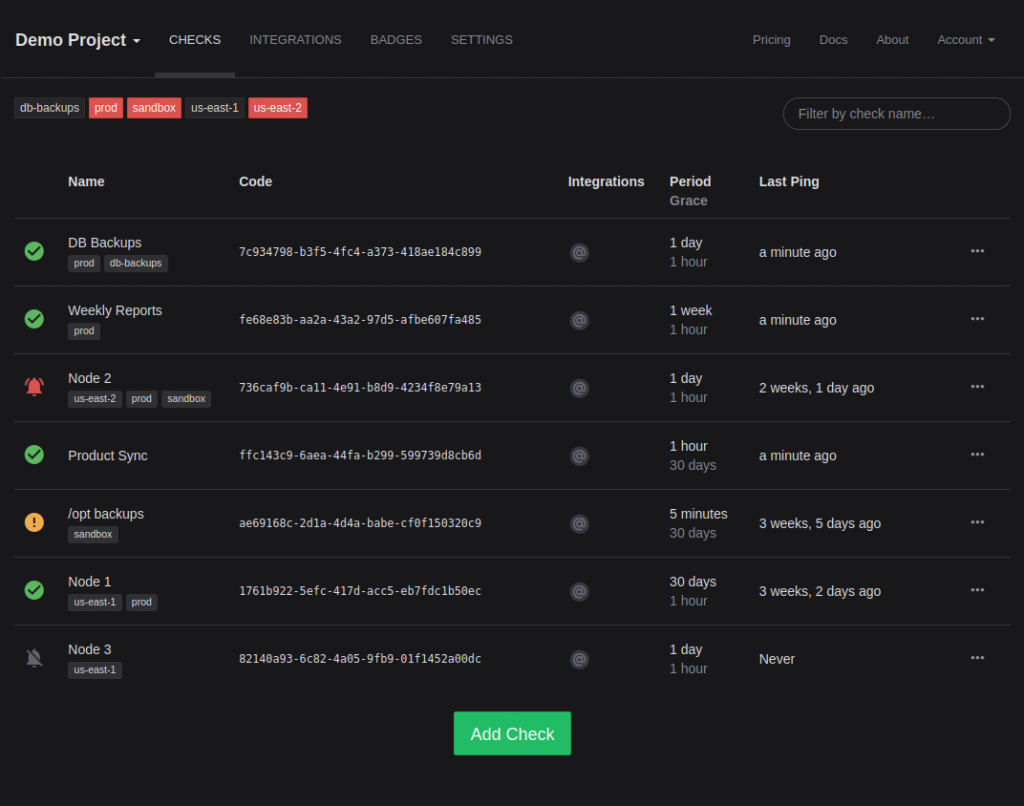

Dark Mode

Healthchecks now has an optional dark theme. You can activate it in Account Settings – Appearance.

Implementing dark mode was, as expected, a lot of work, and there is more work left. Aside from the obvious (page background, body text, panels, buttons), various other bits needed theming, each in its own way:

- Bootstrap components like menus

- Selectize dropdowns

- Period and Grace sliders

- The icon font with integration logos

- Syntax highlighting for code samples

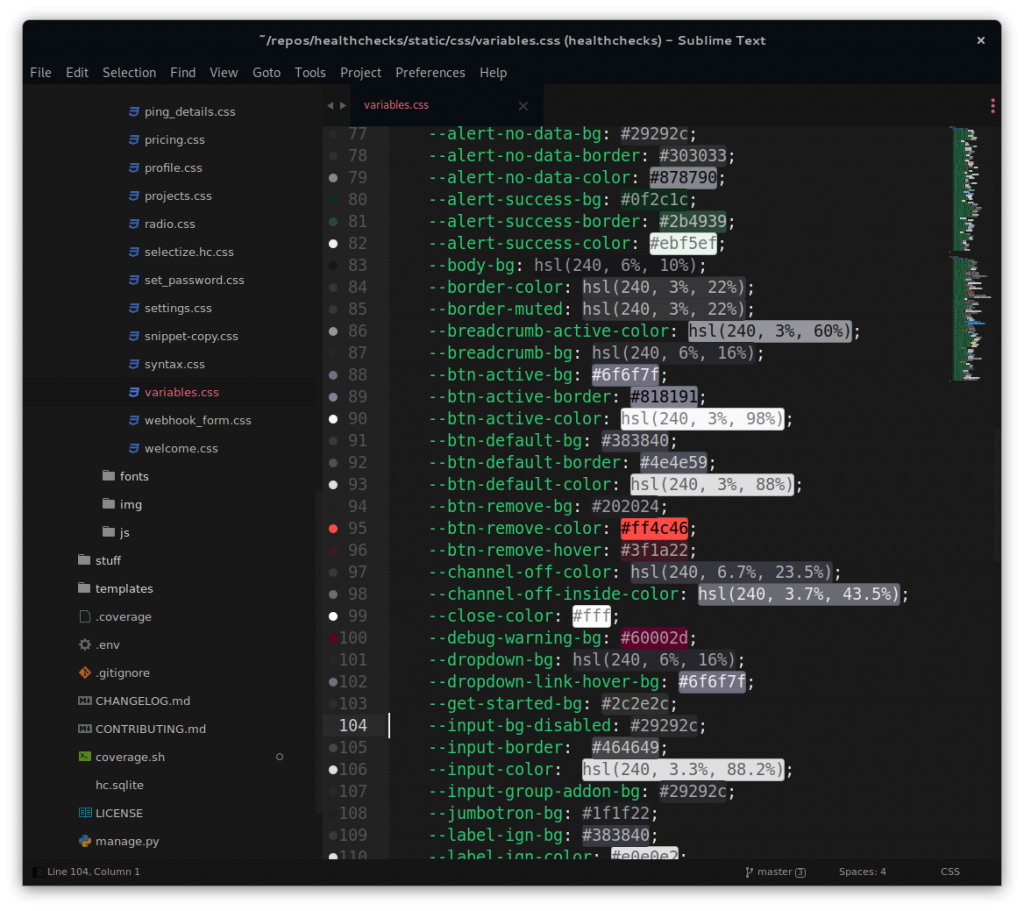

It was interesting work. I use Sublime Text and found the Color Highlighter plugin very handy when working with colors.

After publishing the initial dark mode implementation, I was happy to see people starting to use it. It was not work for nothing: a significant number of users prefer dark mode over the default!

Fuzz Testing croniter, Introducing cronsim

Healthchecks.io had an incident where a single bad cron expression caused system-wide issues. The bad cron expression made the croniter library throw an unexpected exception, which led to a crash-restart loop in the notification sending process. The initial fix was to add “try ... except” around croniter calls, but I later also spent time fuzz testing croniter. I found and filed several crash bugs. The worst one had to do with expressions like 0-1000000000 * * * *: by varying the number of zeroes, I could get the Python process to use up all system memory and eventually crash. I reported this issue privately in January 2021, and the maintainer fixed it the same day.

After digging around in the croniter code, I wanted to try my hand at writing a slimmed-down version. And so I did. Welcome, cronsim! It is 250 lines of code, and it does just one thing: it takes a cron expression and returns a datetime iterator.
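To illustrate what “takes a cron expression and returns a datetime iterator” involves, here is a deliberately simplified sketch. It is not cronsim’s code or API: the `cron_iter` and `parse_field` names are hypothetical, it supports only numeric fields (`*`, values, ranges, steps, comma lists, but no month or weekday names), it works on naive datetimes and so ignores DST entirely, and it gives up after a hypothetical 50-year search window so that impossible expressions such as `0 0 30 2 *` terminate. It does follow the classic cron rule that when both day-of-month and day-of-week are restricted, a day matches if either one matches.

```python
from datetime import datetime, timedelta
from typing import Iterator, Set

# Allowed (min, max) for minute, hour, day of month, month, day of week.
RANGES = [(0, 59), (0, 23), (1, 31), (1, 12), (0, 7)]


def parse_field(field: str, lo: int, hi: int) -> Set[int]:
    """Expand a field such as "*", "5", "1-5", "*/15", "1-10/2" or "1,15"."""
    values: Set[int] = set()
    for part in field.split(","):
        body, slash, step_str = part.partition("/")
        step = int(step_str) if slash else 1
        if step < 1:
            raise ValueError(f"step must be positive: {part!r}")
        if body == "*":
            start, end = lo, hi
        elif "-" in body:
            first, last = body.split("-", 1)
            start, end = int(first), int(last)
        elif slash:
            raise ValueError(f"step needs '*' or a range: {part!r}")
        else:
            start = end = int(body)
        if not lo <= start <= end <= hi:
            raise ValueError(f"value out of range: {part!r}")
        values.update(range(start, end + 1, step))
    return values


def cron_iter(expr: str, start: datetime) -> Iterator[datetime]:
    """Yield naive datetimes strictly after `start` that match `expr`."""
    fields = expr.split()
    if len(fields) != 5:
        raise ValueError("expected 5 fields")
    minutes, hours, days, months, weekdays = [
        parse_field(f, lo, hi) for f, (lo, hi) in zip(fields, RANGES)
    ]
    weekdays = {d % 7 for d in weekdays}  # both 0 and 7 mean Sunday
    dom_star, dow_star = fields[2].startswith("*"), fields[4].startswith("*")

    def day_matches(dt: datetime) -> bool:
        in_dom = dt.day in days
        in_dow = (dt.weekday() + 1) % 7 in weekdays  # Python: Monday == 0
        if dom_star or dow_star:
            return in_dom and in_dow
        return in_dom or in_dow  # both restricted: either one is enough

    dt = start.replace(second=0, microsecond=0) + timedelta(minutes=1)
    while dt.year <= start.year + 50:
        if dt.month not in months:
            # Skip to midnight on the first day of the next month.
            if dt.month == 12:
                dt = datetime(dt.year + 1, 1, 1)
            else:
                dt = datetime(dt.year, dt.month + 1, 1)
        elif not day_matches(dt):
            dt = (dt + timedelta(days=1)).replace(hour=0, minute=0)
        elif dt.hour not in hours:
            dt = (dt + timedelta(hours=1)).replace(minute=0)
        elif dt.minute not in minutes:
            dt += timedelta(minutes=1)
        else:
            yield dt
            dt += timedelta(minutes=1)
```

Skipping whole months, days, and hours when a coarser field does not match keeps the search fast without any clever arithmetic. Because `cron_iter` is a generator, an invalid expression raises `ValueError` on the first `next()` call rather than when `cron_iter` is called.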

I’ve tested cronsim with a large corpus of cron expressions, and for every expression I tested, it produced the same results as the croniter library. There is one area where both libraries produce incorrect results: the handling of daylight saving time (DST) transitions. Getting this right has been surprisingly hard, and I have not cracked it yet. But I did come up with a cool toy: I installed a Debian system inside QEMU (instructions) and used QEMU emulator flags to speed up the system clock inside the VM. With this contraption, I can test cron expressions against the actual running Debian cron daemon and see results in minutes instead of hours or days. Anyway, more work is needed here.

Development Roadmap

The default plan is to continue making small iterative improvements.

In the background, I am also bouncing around ideas about product architecture and reliability. One area is the reliability of the Ping API. Whenever a client makes an HTTP request to a ping endpoint, there is a small but non-zero probability that the request will fail due to packet loss, and the probability increases with the distance between the client and the server. Ideally, the server would be close to the client. There are different ways to go about this and lots to explore. One potential building block is CockroachDB. Impressively, in my testing the Healthchecks test suite passed with the CockroachDB backend out of the box. It Just Worked, but to make it perform well, I would need to make several changes. For example, the big and write-heavy “api_ping” table has an auto-incrementing integer primary key, which would not work well in a distributed database: sequential keys send every new insert to the same end of the key space, creating a write hotspot.
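The hotspot problem with sequential keys can be shown with a toy model of a range-partitioned table, where the key space is split into ranges at quantiles of the rows already stored. This is a simplification for illustration, not how CockroachDB actually splits or rebalances ranges, and the row counts and number of ranges are arbitrary. New sequential keys all land in the last range, while random 128-bit keys (comparable to random UUID values) spread across every range.

```python
import bisect
import random
from collections import Counter


def split_points(existing_keys, num_ranges):
    """Pick range boundaries at quantiles of the keys already stored."""
    keys = sorted(existing_keys)
    step = len(keys) // num_ranges
    return [keys[i * step] for i in range(1, num_ranges)]


def insert_distribution(existing_keys, new_keys, num_ranges=8):
    """Count how many new keys land in each range (0 .. num_ranges - 1)."""
    bounds = split_points(existing_keys, num_ranges)
    return Counter(bisect.bisect_right(bounds, key) for key in new_keys)


N = 100_000

# Sequential integer keys: new rows continue the existing sequence.
seq = insert_distribution(range(1, N + 1), range(N + 1, N + 1001))

# Random 128-bit keys: new rows land anywhere in the key space.
rng = random.Random(42)
existing = [rng.getrandbits(128) for _ in range(N)]
rand = insert_distribution(existing, [rng.getrandbits(128) for _ in range(1000)])

print("sequential:", dict(seq))  # {7: 1000}: every insert hits the last range
print("random:", dict(rand))
```

In a distributed database, each range is served by a particular set of nodes, so the sequential case funnels all write traffic through one place. Using random UUID primary keys is one common remedy that CockroachDB’s documentation recommends.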

Healthchecks.io the Business

I’ve reduced my other work commitments, and Healthchecks.io is now my main occupation and my main source of income. Not quite “full-time” yet, but getting there!

I regularly update the About page with running stats (ping volume, the number of users, revenue, …), so you can check out the numbers there!

As the project’s revenue slowly creeps up, I have started getting more frequent “Acquisition?” emails. I don’t have plans to sell the project in the foreseeable future. There is too much work and soul put into it, and I also simply enjoy working on it and running it (aside from dealing with infrastructure outages I have no control over; those are not fun at all!).

That’s it for now, thank you for reading! Here’s to another 6 years! In closing, here’s a complimentary picture of me attacking a hornet nest with a pressure washer:

Happy monitoring,

Pēteris,

Healthchecks.io